It happened again today. After helping a client build an amazingly effective natural, content-based link building strategy, links started pouring in and the complaints began. “The Page Authority isn’t high enough. The Domain Authority isn’t high enough.” Every time I hear this, I am reminded of a great presentation I saw from Wil Reynolds at Seer Interactive early in my career. He paraphrased it in an interview on Vertical Measures.

Anyway, what I learned with this competitive space for this client was that the links they got from the New York Times, Shape magazine, Cosmo, Oprah magazine, Men’s Health, and every other major magazine about health in about a six month period did nothing to lift their rankings…today they rank between 5-7, back when they got all these links from these major magazines they did not improve, they stayed in the low 20’s

Wil Reynolds

What Wil pointedly described is the fascination we have with big wins, when the accumulation of small wins is what succeeds in the end. Unfortunately, even the best minds and communicators in our industry, among whom I count Wil, seem incapable of ridding our industry of the pervasive myth that we just need a couple of “really good links” (whatever that may mean) instead of a diverse set of natural links.

Well, I might not have a way with words like Wil, but I have a way with data. So this morning, chip fully implanted on my shoulder, I decided to dig into the question of what natural links really look like.

Wikipedia as our Model

Many years ago I wrote a piece on Moz where we looked at different features of inbound links to determine whether or not a particular site had an unrealistic link graph. Essentially, we considered Wikipedia as having the ideal model of inbound links, and compared other link profiles to that of a random selection of Wikipedia pages. Wikipedia is a fairly strong model because nearly all of its links are completely natural and non-commercial in nature. So, what does the Wikipedia link profile look like in terms of Page Authority and Domain Authority? We analyzed 2500 randomly selected Wikipedia pages with backlinks (ignoring those with no backlinks at all).

Let’s start with the basic statistics…

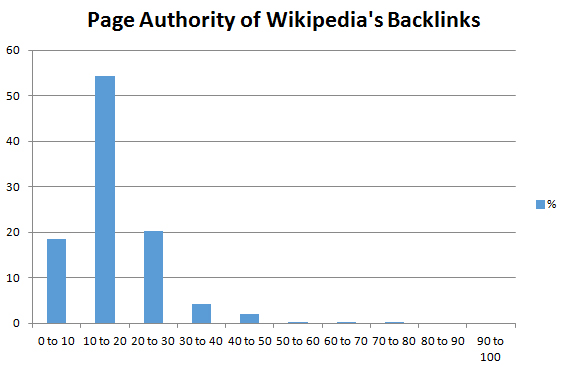

The average (mean) link pointing to a Wikipedia page has a Page Authority of 16.79 and a Domain Authority of 30.42. Were you expecting something higher, or lower? Well, we can’t just stop here. What is the standard deviation? The standard deviation is 8.39 for Page Authority and 18.14 for Domain Authority. That is huge! Is there a skew in the data set that makes for a non-normal distribution? It turns out the median is lower, at 14.63 Page Authority and 24.29 Domain Authority. Ok, so now we are getting somewhere. The averages are higher because they are pulled up by a few very high-authority links, while most links score much lower. Let’s see if we can visualize this to get a better perspective.
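If you want to run the same sanity check on your own backlink export, here is a minimal sketch. It assumes you already have a plain list of Page Authority (or Domain Authority) scores; the `summarize` helper and the sample values are hypothetical and invented for illustration, not the Wikipedia data set.

```python
import statistics

def summarize(scores):
    """Return mean, median, and sample standard deviation for a list of scores."""
    mean = statistics.mean(scores)
    median = statistics.median(scores)
    stdev = statistics.stdev(scores)
    return {
        "mean": mean,
        "median": median,
        "stdev": stdev,
        # A mean above the median hints at a right-skewed distribution
        "right_skewed": mean > median,
    }

# Hypothetical Page Authority scores, made up for this example
sample_pa = [8, 10, 12, 13, 14, 15, 16, 18, 22, 55]
summary = summarize(sample_pa)
print(summary)
```

In this made-up sample a single high-authority link drags the mean well above the median, which is the same pattern described above.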

Take a close look. Nearly 75% of Wikipedia’s inbound links have a Page Authority of less than 20! In fact, if you get a backlink with a Page Authority of 25, your new link is better in terms of Page Authority than over 80% of the inbound links to Wikipedia. If it is above 30, you have bested 90% of Wikipedia’s backlinks. Given that Page Authority correlates far better with rankings than Domain Authority, you should be golden with that kind of link, right? By the same logic, you would think that Wikipedia must be suffering horribly given the terrible Page Authority of its inbound links.
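The "better than X% of links" comparison above is a percentile rank. A rough sketch of how you might compute it for a new link against an existing profile follows; `percent_below` is a hypothetical helper and the scores are invented, so the output says nothing about Wikipedia's real numbers.

```python
from bisect import bisect_left

def percent_below(scores, new_score):
    """Percentage of existing scores that are strictly below new_score."""
    ordered = sorted(scores)
    # bisect_left returns how many items in the sorted list are < new_score
    return 100.0 * bisect_left(ordered, new_score) / len(ordered)

# Hypothetical Page Authority scores for an existing link profile
sample_pa = [8, 10, 12, 13, 14, 15, 16, 18, 22, 55]
print(percent_below(sample_pa, 25))
```

Swap in your own exported scores to see where a prospective link would land in your profile.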

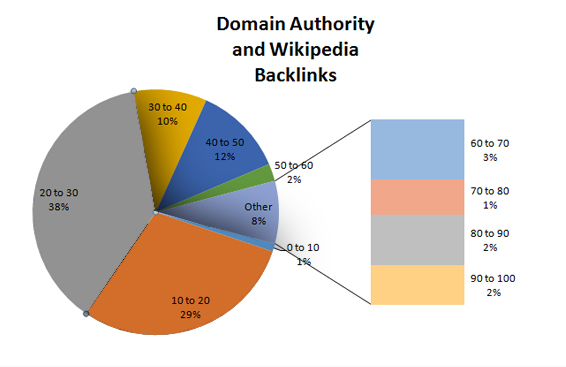

What about Domain Authority? Let’s try a different visualization.

Once again, take a close look. Nearly 70% of all inbound links to Wikipedia come from domains with a Domain Authority below 30, and 30% come from domains below 20, which leaves roughly 40% in the 20 to 30 band. In fact, given the standard deviation we discussed earlier, any Domain Authority between roughly 12 and 49 is within 1 standard deviation of the mean, although the skewed tail of the curve means a typical link sits toward the lower end of that range.
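The one-standard-deviation range follows directly from the Domain Authority mean (30.42) and standard deviation (18.14) reported earlier. As a quick check, with `one_sd_band` being a hypothetical helper name:

```python
def one_sd_band(mean, stdev):
    """Return the (low, high) bounds one standard deviation either side of the mean."""
    return (mean - stdev, mean + stdev)

# Domain Authority mean and standard deviation as reported in this post
low, high = one_sd_band(30.42, 18.14)
print(round(low, 2), round(high, 2))
```

Keep in mind that a band like this is only a rough guide here, since the distribution is skewed rather than normal.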

So, What Does This Mean?

Stop obsessing over metrics and start obsessing over natural. Get natural links. Just get them. Produce great content, do great outreach, build relationships, and get more links. Every second you spend elephant hunting, trying to get that single awesome link, is almost certainly a complete waste of your time. Quality does matter, but less in the sense of influence, and more in the sense of legitimacy. When it comes to links, Google wants to be a democracy, not an oligarchy.

The next time someone complains to you that their links don’t have high enough metrics, point them to this post. Ask them if Wikipedia is suffering because so many of its links have low metrics. Or better yet, if they are a competitor, don’t tell them a thing. Let them keep wasting their time and money. You just keep building links the Wikipedia way.
