TL;DR: Understanding Grounding Queries in Bing’s AI Performance Report to Dominate LLM Visibility

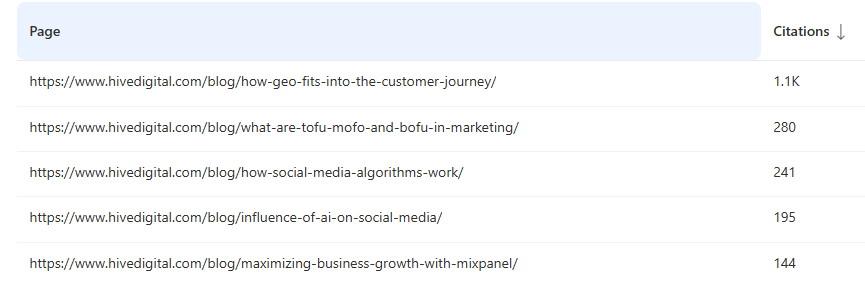

The launch of Bing’s AI Performance tool has exposed a massive Visibility Gap. Our research into Hive Digital’s top-performing GEO blog post shows that citations are not a proxy for human search traffic. While the article received over 1,000 citations for specific grounding queries, it registered nearly zero impressions in traditional Bing search. This suggests that the majority of current AI Search activity is driven by programmatic tools and LLM query fan-outs rather than human users.

The Architecture of Grounding Queries

To optimize for AI, we must first understand that the prompt is not the query. When a user enters a prompt into an LLM, the model performs a Query Fan-out (QFO): it breaks the prompt down into several narrower searches and runs those against a search index.

- Prompt: “What are the best SEO tools for 2026?”

- Grounding Queries: The LLM breaks this down into multiple searches like “top-rated SEO software 2026,” “SEO tool comparisons,” or “expert reviews of Hive Digital tools.”

These grounding queries are designed to fetch external data and reduce hallucinations. They often exhibit “language drift,” where the LLM’s search terms vary slightly but stay within a specific semantic neighborhood.

The Case Study: Hive Digital vs. The AI Ecosystem

The article “How GEO Fits Into the Customer Journey” serves as the perfect baseline for this phenomenon.

Data Discrepancy:

- Bing AI Citations: 1,064 Citations across two primary grounding queries.

- Bing Search Performance: 3 Impressions total over the same 3-month period.

- Google Search Console (GSC): 452 Impressions / 6 Clicks.

Analysis: This roughly 355x difference between Bing citations and Bing impressions (1,064 citations against 3 impressions) indicates that Copilot is retrieving this content for its answers, but users are not finding the page through the traditional “Blue Link” search interface.
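The ratio can be checked directly from the figures reported above. The following is a minimal sketch; the variable and function names are ours for illustration, and only the four input numbers come from the case study.

```python
# Figures as reported in the case study above (same 3-month period).
bing_ai_citations = 1064
bing_search_impressions = 3
gsc_impressions = 452
gsc_clicks = 6


def ratio(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or infinity when the denominator is zero."""
    return float("inf") if denominator == 0 else numerator / denominator


# How many AI citations were recorded for every traditional Bing impression.
citations_per_impression = ratio(bing_ai_citations, bing_search_impressions)

# Click-through rate in Google Search Console, for comparison.
gsc_ctr = ratio(gsc_clicks, gsc_impressions)

print(f"Bing citations per Bing impression: {citations_per_impression:.0f}x")
print(f"GSC click-through rate: {gsc_ctr:.1%}")
```

With only three Bing impressions in the denominator, the ratio is very sensitive to small changes, so it is best read as an order of magnitude rather than a precise multiplier.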

Anatomy of the “Grounding Query”

The citations were triggered by two highly structured phrases:

- “AI search optimization GEO platforms customer journey awareness consideration decision tracking” (716 Citations)

- “AI search optimization GEO platforms customer journey tracking awareness consideration decision” (348 Citations)

These are not natural human queries. As noted in the Bing Webmaster Blog, grounding queries are retrieval phrases used by the AI, not the original user prompt (Microsoft Bing – Introducing AI Performance in Bing Webmaster Tools). These specific, repetitive strings strongly indicate tool-driven probes, likely GEO monitoring platforms or automated brand affinity reports, scanning the web to evaluate how marketing companies, like Hive Digital, discuss these topics.
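One way to see how closely the two grounding queries are related is to compare their word sets. The sketch below is an illustration under our own assumptions (whitespace tokenization, Jaccard overlap, helper names `tokens` and `jaccard`), not a description of how Bing groups queries.

```python
def tokens(query: str) -> list[str]:
    """Lowercase the query and split it on whitespace."""
    return query.lower().split()


def jaccard(a: str, b: str) -> float:
    """Share of distinct words the two queries have in common (0.0 to 1.0)."""
    set_a, set_b = set(tokens(a)), set(tokens(b))
    union = set_a | set_b
    return len(set_a & set_b) / len(union) if union else 1.0


# The two grounding queries quoted in the case study.
query_a = ("AI search optimization GEO platforms customer journey "
           "awareness consideration decision tracking")
query_b = ("AI search optimization GEO platforms customer journey "
           "tracking awareness consideration decision")

overlap = jaccard(query_a, query_b)
same_words = sorted(tokens(query_a)) == sorted(tokens(query_b))
same_order = tokens(query_a) == tokens(query_b)

print(f"Word overlap: {overlap:.2f}")
print(f"Same words: {same_words}, same order: {same_order}")
```

The two strings contain exactly the same eleven words; only the position of “tracking” differs. That kind of reordering is consistent with the “language drift” described earlier.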

Can We Trust Bing’s AI Performance Data?

The data is technically accurate but contextually misleading if viewed through a traditional SEO lens.

- Why it is accurate: It records every time the Bing index was queried by an LLM (Copilot, ChatGPT, etc.) to fetch your URL for a “grounding” step.

- Why it requires caution: A “Citation” does not equal a “Click” or even a “Human View.” It signifies that your content was “read” by a machine to inform a response.

How to Validate the AI Performance Data in Bing Webmaster Tools

To confirm if these citations represent real users or bots, SEOs must move beyond the dashboard:

- Server Log Analysis: Check your logs for specific User Agents. Look for Bing’s user agents (bingbot, BingPreview) or specialized AI crawlers (e.g., OAI-SearchBot) occurring at the same timestamps as the citation spikes.

- Query Pattern Recognition: Human queries in GSC (e.g., “how do ai search optimization/geo platforms track insights…”) show natural syntax. Structured, keyword-stacked strings in Bing with no natural syntax are almost certainly programmatic.

- Referral Tracking: Cross-reference Bing’s data with GA4 referral source data. Referrals are the most reliable indicator of how many human visitors actually reach your website through citation links in LLM platforms.
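The server log check can be sketched as a small script. This is a minimal example under stated assumptions: the logs are in the common “combined” access log format, the sample lines, IP addresses, dates, and the path `/blog/example-post/` are invented for illustration, and the user-agent markers are simple substrings rather than an exhaustive or verified list.

```python
import re
from collections import Counter
from datetime import datetime

# Substrings to look for in the User-Agent header (assumed, not exhaustive).
AI_AGENT_MARKERS = ("bingbot", "bingpreview", "oai-searchbot")

# Combined log format: ... [time] "METHOD path PROTO" status bytes "referer" "agent"
LOG_PATTERN = re.compile(
    r'\[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def ai_hits_per_hour(lines, path):
    """Count requests for `path` per (hour, agent marker) made by matching agents."""
    hits = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or match.group("path") != path:
            continue
        agent = match.group("agent").lower()
        marker = next((m for m in AI_AGENT_MARKERS if m in agent), None)
        if marker is None:
            continue  # not one of the agents we are tracking
        stamp = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z")
        hits[(stamp.strftime("%Y-%m-%d %H:00"), marker)] += 1
    return hits


# Hypothetical sample lines; real logs would be read from a file.
SAMPLE_LOG = [
    '203.0.113.7 - - [12/Feb/2026:09:15:02 +0000] "GET /blog/example-post/ HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '203.0.113.7 - - [12/Feb/2026:09:47:30 +0000] "GET /blog/example-post/ HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '198.51.100.4 - - [12/Feb/2026:09:47:31 +0000] "GET /blog/example-post/ HTTP/1.1" '
    '200 5123 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0; +https://openai.com/searchbot)"',
    '192.0.2.55 - - [12/Feb/2026:10:02:11 +0000] "GET /blog/example-post/ HTTP/1.1" '
    '200 5123 "https://www.bing.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0"',
    '203.0.113.7 - - [12/Feb/2026:10:05:00 +0000] "GET /about/ HTTP/1.1" '
    '200 2048 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
]

hits = ai_hits_per_hour(SAMPLE_LOG, "/blog/example-post/")
for (hour, marker), count in sorted(hits.items()):
    print(hour, marker, count)
```

Hourly buckets make it easier to line up crawler activity with the dates of citation spikes in the dashboard. User-agent strings can be spoofed, so treat a match as a hint rather than proof.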

How to Rank in LLMs

While some argue GEO is a distinct discipline, it remains fundamentally rooted in SEO. To rank in an LLM, you must first rank in the search engines that LLMs use for grounding (Bing, Google, Perplexity).

Tactical Recommendations for SEOs:

- Identify Language Drift: Use Bing’s AI Performance data to find common grounding query patterns that differ from your traditional keyword list.

- Optimize for Tool-Based Queries: Incorporate terms like “evaluate,” “monitor,” or “comparison” to capture the traffic generated by automated GEO tools and brand monitors.

- High-Visibility Sections: Place these grounding keywords high in the HTML (H2s or the first paragraph) to ensure LLM “scrapers” prioritize the content.

- Satellite Sites: Dedicated pages built around specific queries (such as a comparison of your brand vs. a competitor’s brand) can be used to inject specific narratives into the LLM’s knowledge base.
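The first recommendation, identifying language drift, can be approximated by listing the words that appear in grounding queries but not in your tracked keywords. The sketch below uses the two grounding queries from the case study; the `tracked_keywords` list and the `drift_terms` helper are hypothetical and exist only for illustration.

```python
from collections import Counter


def drift_terms(grounding_queries, tracked_keywords):
    """Return (term, citations) pairs for words absent from the tracked keywords.

    grounding_queries maps each grounding query to its citation count.
    Results are sorted by citations (descending), then alphabetically.
    """
    tracked = {term for kw in tracked_keywords for term in kw.lower().split()}
    weights = Counter()
    for query, citations in grounding_queries.items():
        for term in set(query.lower().split()):
            if term not in tracked:
                weights[term] += citations
    return sorted(weights.items(), key=lambda item: (-item[1], item[0]))


# Grounding queries and citation counts from the case study.
grounding_queries = {
    "AI search optimization GEO platforms customer journey "
    "awareness consideration decision tracking": 716,
    "AI search optimization GEO platforms customer journey "
    "tracking awareness consideration decision": 348,
}

# Hypothetical keyword list a site might already be tracking.
tracked_keywords = [
    "generative engine optimization",
    "GEO customer journey",
    "AI search optimization",
]

drift = drift_terms(grounding_queries, tracked_keywords)
for term, citations in drift:
    print(f"{term}: {citations}")
```

Terms that surface here, weighted by citations, are candidates for headings and opening paragraphs. Word-level matching is crude, so review the output by hand before acting on it.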

Bridging the Visibility Gap

The data is clear: the traditional search funnel has been compressed into a retrieval layer. As our case study demonstrates, appearing in an LLM’s grounding process is an entirely different mechanical challenge than ranking for a “blue link.” If your brand is being cited thousands of times but receiving zero traffic, you aren’t just missing out on clicks; you are allowing machines to synthesize your brand narrative without a direct path to conversion.

Navigating this “Visibility Gap” requires more than just keyword research; it requires a deep understanding of query fan-outs, language drift, and the technical signals that trigger LLM citations. At Hive Digital, we don’t just monitor these shifts, we architect content to dominate them.

Whether you are looking to secure your brand’s reputation in AI summaries or capture the programmatic traffic of the next generation of search, our team is at the forefront of Generative Engine Optimization.

Explore our AI Search and LLMO Services to see how we can align your brand with the future of AI retrieval.

Frequently Asked Questions (FAQs)

- What is the difference between a user prompt and a grounding query?

A user prompt is the natural language question a human asks an AI (e.g., “Find me a sustainable SEO agency”). A grounding query is the technical, search-engine-friendly sub-query generated by the LLM to find facts to support its answer. As noted in our Hive Digital case study, these are often highly structured, repetitive strings that do not resemble natural human speech.

- Why does my Bing AI Performance report show high citations but low traffic?

A citation occurs when an LLM retrieves your content to “ground” its response. This does not always result in a clickable link presented to the user. In many cases, the AI uses your data to synthesize an answer and provides a citation footnote that users rarely click. This creates a high volume of “machine impressions” with minimal “human clicks,” a phenomenon we call the Visibility Gap.

- Can I trust the grounding query data in Bing Webmaster Tools?

The data is technically accurate in that it records retrieval events, but it is contextually complex. Many grounding queries are triggered by automated GEO monitoring tools rather than real humans. To validate the “truth” of this data, you must cross-reference it with Server Log Analysis and GA4 referral data to distinguish between programmatic probes and actual human visitors.

- How does GEO differ from traditional SEO?

Traditional SEO focuses on ranking for human intent within a search engine’s results page. GEO (Generative Engine Optimization) focuses on making content highly “extractable” and “authoritative” for LLMs. While GEO is rooted in SEO fundamentals, like structured data and clear headings, it places a higher priority on influencing the “Query Fan-out” process to ensure your brand is selected as a primary source during the AI’s grounding phase.

- Are these “programmatic” queries a bad thing?

Not necessarily. While they don’t drive immediate traffic, they influence the “Knowledge Base” of the LLM. If your site is the primary source for a GEO tool’s brand evaluation, your brand will be perceived as more authoritative by the LLM, leading to better sentiment and more frequent mentions in future human-facing AI responses.