The onset of manually applied Google penalties had many internet marketers scrambling to remove once-valuable links that were now threatening to devalue their clients’ websites and their own. Fortunately for these webmasters, Google released the disavow tool in October 2012, which lets webmasters tell Google which URLs and domains host unnatural links that cannot be removed due to extenuating circumstances. These situations include unresponsive webmasters, ransom requests (you can read about my opinion of these link removal payments here), and indignant webmasters who think the links are perfectly fine and berate you for even contacting them with a link removal request (this happens more often than not).

Should I Remove or Disavow Unnatural Links?

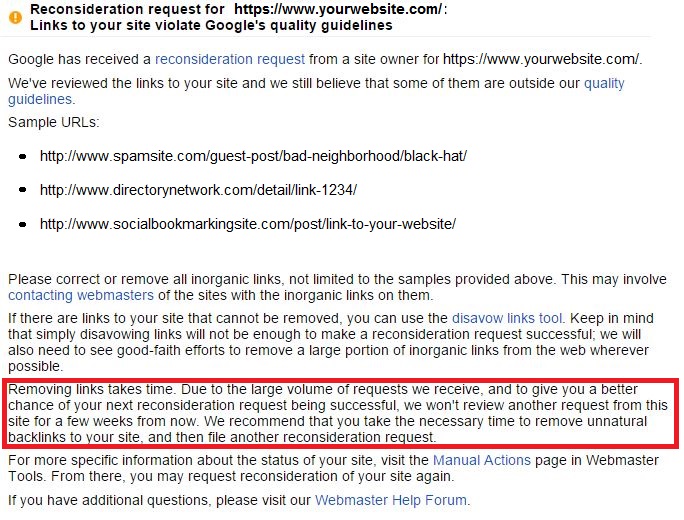

Unfortunately, many webmasters have abandoned removing links (and cleaning up the spam they had spread across the Internet) in favor of disavowing all the bad domains and URLs, then submitting multiple reconsideration requests until the reviewers at Google are worn down enough to approve their site for full re-inclusion. Several companies have also told their clients that they do not need to remove links to properly handle a penalty. This approach is mistaken: in its reconsideration request rejection letters, Google states, “Removing links takes time…. We recommend that you take the necessary time to remove unnatural backlinks to your site, and then file another reconsideration request.” (see the screenshot)

If you have received one of these messages after submitting a reconsideration request to Google, you need to go back and perform a few tasks. First, contact the websites that Google listed in the rejection letter. Next, re-analyze the backlinks to your website to see whether the exports from Google Webmaster Tools provide any new data. More often than not, the Latest Links export from Google WMT contains only a portion of the link data Google has in its index, so it may be necessary to analyze the backlinks several times, even if you use multiple sources. Contact any new sites found in the re-analysis, and only THEN submit a disavow file to Google containing the sites that were unresponsive, asked for payment, or outright refused to remove the links.
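The last step, assembling the disavow file, can be sketched in Python. This is a minimal sketch, assuming outreach results are tracked as (URL, status) pairs; the status labels, the sample domains, and the function name `build_disavow_lines` are hypothetical and not part of any Google tooling. The output uses the disavow file's plain-text format: one `domain:` entry or URL per line, with `#` marking comment lines.

```python
from urllib.parse import urlparse

# Hypothetical status labels for outreach outcomes; only these are disavowed.
DISAVOW_STATUSES = {"unresponsive", "asked_for_payment", "refused"}


def build_disavow_lines(outreach_results):
    """Return disavow-file lines for links that could not be removed.

    outreach_results: iterable of (linking_url, status) pairs (assumed structure).
    """
    domains = set()
    for url, status in outreach_results:
        if status not in DISAVOW_STATUSES:
            continue  # link was removed or outreach is still pending
        host = urlparse(url).hostname
        if host:
            domains.add(host.lower())
    lines = ["# Sites contacted for link removal without success"]
    lines += [f"domain:{d}" for d in sorted(domains)]
    return lines


if __name__ == "__main__":
    results = [
        ("http://spammy-directory.example/page1", "unresponsive"),
        ("http://article-farm.example/post", "asked_for_payment"),
        ("http://friendly-blog.example/links", "removed"),
    ]
    print("\n".join(build_disavow_lines(results)))
```

Sites that actually removed the link are deliberately left out, so the file lists only what outreach could not resolve.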

Check out this video from Matt Cutts highlighting common mistakes that webmasters make using the disavow tool:

Which is More Effective? Removing or Disavowing?

Removing links tends to be more effective at alleviating algorithmic filter penalties as well. Disavowal can take days, weeks, or even months to have an effect, whereas once a link is removed, you can ask Google to re-crawl the page, and it will re-index the page without the link, cutting off the “link juice” from the unnaturally linking site.

The biggest issue we’ve seen with leaving unnatural links alone is that they can spawn more links. For example, let’s say you used an article submission service, and those links were syndicated across hundreds of sites. Over time, scraper sites index those pages and duplicate the content. Ultimately, those links will reappear in your backlink profile unless you monitor and disavow on an ongoing basis. Even then, the damage could be done before you get to the linking websites. If the link is removed at the source, that is one less link you have to worry about later due to scraping and syndication.
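The ongoing monitoring described above amounts to comparing each new backlink export against what you have already reviewed. The following is a rough sketch, assuming each export has been reduced to a plain list of linking URLs; the function names and sample domains are hypothetical.

```python
from urllib.parse import urlparse


def linking_domains(urls):
    """Reduce a list of linking URLs to a set of lowercase hostnames."""
    hosts = set()
    for url in urls:
        host = urlparse(url.strip()).hostname
        if host:
            hosts.add(host)
    return hosts


def new_domains(previous_urls, latest_urls, already_handled=()):
    """Domains in the latest export that were not seen before or already handled."""
    seen = linking_domains(previous_urls) | {d.lower() for d in already_handled}
    return sorted(linking_domains(latest_urls) - seen)


if __name__ == "__main__":
    previous = ["http://article-site.example/post"]
    latest = [
        "http://article-site.example/post",
        "http://scraper-copy.example/post-duplicate",
    ]
    # Lists the domains that appeared since the previous export.
    print(new_domains(previous, latest))
```

Any domain this surfaces is a candidate for a fresh removal request before it is added to the disavow file.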

The road to penalty recovery is not easy. Link removal outreach is a difficult process, but it is required to deal with a manual penalty against your website. The most important quote to take away from Matt Cutts during his tenure as head of Google’s webspam team was this: “Before using the disavow tool, one is expected to make multiple link removal requests on their own. Only after there is a small fraction of links left to remove, should one use the tool.”
